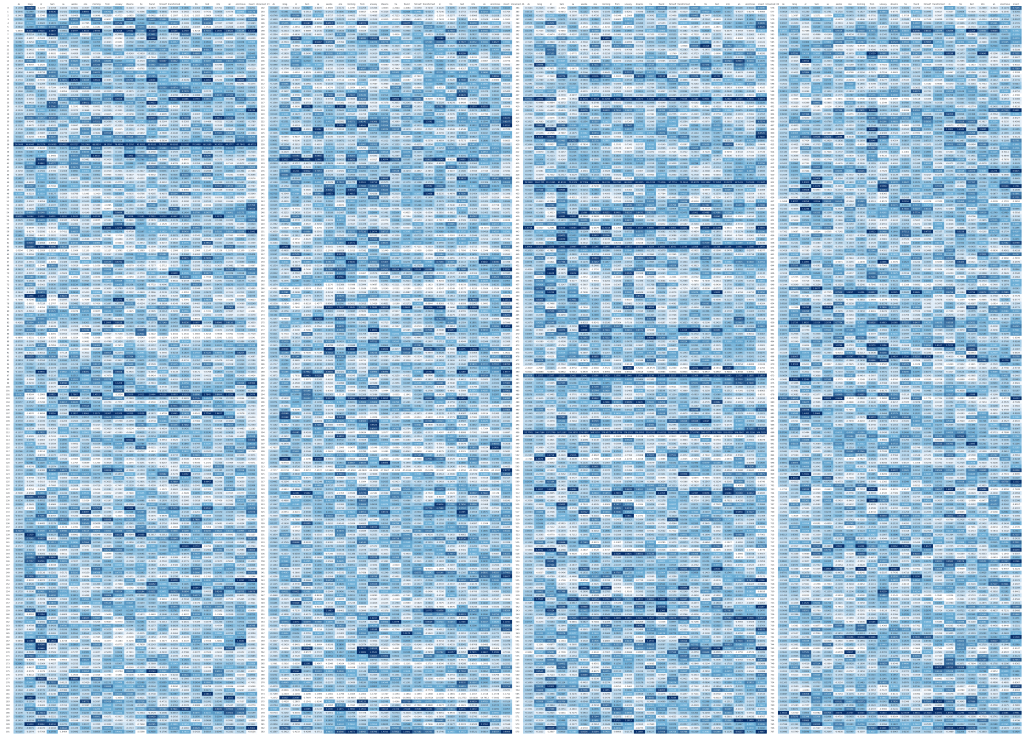

the big blue rectangle

as gregor samsa awoke one morning

from uneasy dreams

he found himself transformed

in his bed

into a gigantic insect

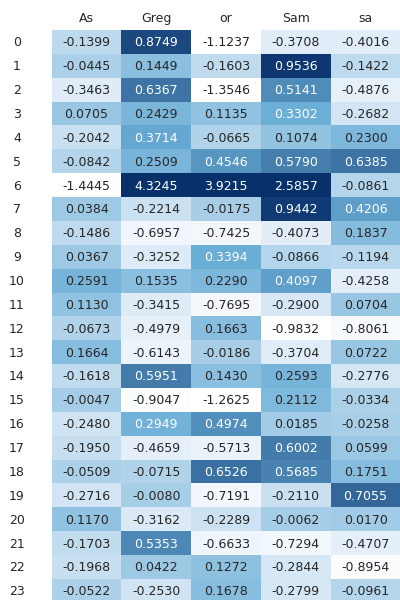

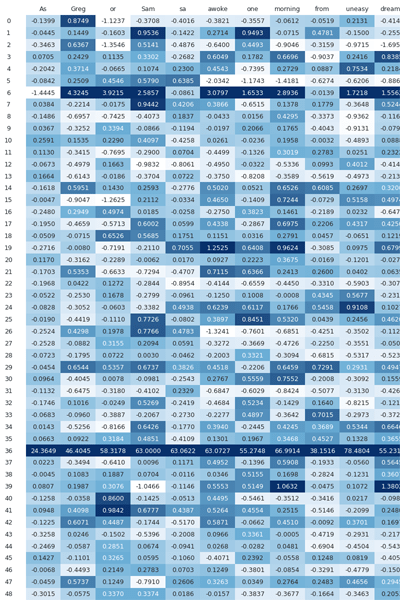

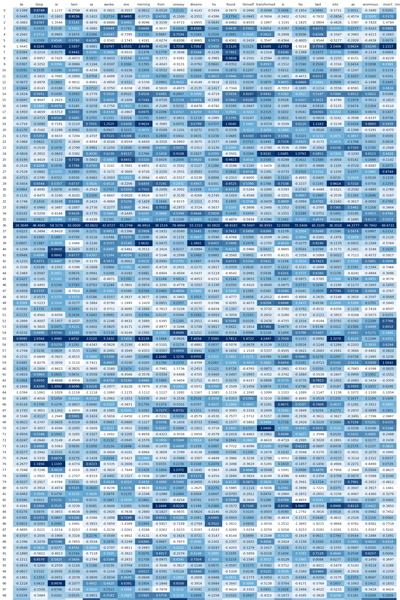

gpt-2 processes this sentence as a sequence of tokens.

each token enters the model as the sum of two 768-dimensional vectors: one for the token itself, one for its position.

across 12 layers, those values shift as tokens interact with one another.

by the end, each token arrives at a ‘final hidden state’:

a last internal form from which the model continues its reading.

the big blue rectangle visualizes these final hidden states.

each token’s 768-dimensional vector is mapped to color values and arranged into a grid.

the image does not represent the sentence itself.

it represents how the model internally organizes the sentence.
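
one way such a mapping could work, as a minimal sketch: scale every value in the matrix between the overall minimum and maximum, then blend between a dark and a light blue. the color ramp, the global scaling, and the names `to_blue` and `hidden_states_to_grid` are assumptions for illustration, not the method used to make the image. the toy matrix stands in for the real one, which has one row per token and 768 columns.

```python
# Assumed color ramp: low values are dark blue, high values are light blue.
DARK_BLUE = (0, 0, 96)
LIGHT_BLUE = (160, 208, 255)


def to_blue(value, lo, hi):
    """Map one activation to an (R, G, B) tuple on the assumed blue ramp."""
    # Normalize to [0, 1]; a constant matrix maps everything to the dark end.
    t = 0.0 if hi == lo else (value - lo) / (hi - lo)
    return tuple(round(d + t * (l - d)) for d, l in zip(DARK_BLUE, LIGHT_BLUE))


def hidden_states_to_grid(matrix):
    """Turn a tokens x dimensions matrix into a grid of RGB tuples."""
    lo = min(min(row) for row in matrix)
    hi = max(max(row) for row in matrix)
    return [[to_blue(v, lo, hi) for v in row] for row in matrix]


# Toy stand-in: 3 tokens, 4 dimensions each (the real vectors have 768).
toy = [
    [-1.0, 0.0, 0.5, 1.0],
    [0.2, -0.4, 0.9, 0.1],
    [1.0, 1.0, -1.0, 0.0],
]
grid = hidden_states_to_grid(toy)
```

each row of `grid` is one token, each cell one dimension. scaling per token or per column instead of globally would give a different picture of the same numbers.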

    import pandas as pd
    import dataframe_image as dfi
    import torch
    from transformers import GPT2Model, GPT2Tokenizer
    import matplotlib.pyplot as plt

    # load the pretrained GPT-2 tokenizer and model
    model_name = "gpt2"
    tokenizer = GPT2Tokenizer.from_pretrained(model_name)
    model = GPT2Model.from_pretrained(model_name, output_attentions=True, output_hidden_states=True)
    model.eval()

    text = "As Gregor Samsa awoke one morning from uneasy dreams he found himself transformed in his bed into an enormous insect"
    inputs = tokenizer(text, return_tensors="pt")
    input_ids = inputs.input_ids
    tokens = tokenizer.convert_ids_to_tokens(input_ids[0])

    # row labels of the form "index: token"
    title = []
    for i in range(len(tokens)):
        add = str(i) + ": " + tokens[i]
        title.append(add)

    # forward pass without gradient tracking
    with torch.no_grad():
        outputs = model(**inputs)

    attentions = outputs.attentions
    # last entry of hidden_states: one 768-dimensional vector per token
    final_hidden_state = outputs.hidden_states[-1]
    hidden_matrix = final_hidden_state.squeeze(0)

    # one row per token, one column per dimension
    df = pd.DataFrame(hidden_matrix.numpy(), index=title)
    df_styled = df.style.set_properties(**{'max_cols': -1})
    df.to_csv("C:/Users/musta/Desktop/final_hidden_states_with_labels.csv")
    print('done')

as gregor samsa awoke one morning

from uneasy dreams

he found himself transformed

in his bed

into a gigantic insect